What Is Blue/Green Deployment in AWS?

A blue/green software deployment strategy involves deploying two versions of an application in parallel to ensure availability and reduce risks during a deployment:

- Blue—an environment that runs the existing application version.

- Green—an environment that runs the new application version.

The blue/green deployment pattern lets you launch the green environment, direct live traffic to it, and see if everything is working well. If there are issues, you can easily switch traffic back to the blue environment. If it looks good, the green version becomes the new production environment.
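The switch-and-rollback behaviour described above can be sketched as a toy model. This is only an illustration: the `Router` class and its method names are hypothetical and do not correspond to any AWS API.

```python
class Router:
    """Toy model of a blue/green switch: one pointer decides who gets live traffic."""

    def __init__(self) -> None:
        self.live = "blue"     # environment currently serving users
        self.previous = None   # environment we can fall back to

    def switch_to(self, env: str) -> None:
        # Remember the old environment so a rollback stays possible.
        self.previous, self.live = self.live, env

    def rollback(self) -> None:
        if self.previous is None:
            raise RuntimeError("nothing to roll back to")
        self.live, self.previous = self.previous, self.live


router = Router()
router.switch_to("green")  # cut over to the new version
router.rollback()          # issues found: traffic returns to blue
print(router.live)
```

The key property is that the old environment is kept intact after the switch, so rolling back is just another pointer change rather than a redeployment.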

Amazon Web Services (AWS) supports the blue/green pattern, providing several ways to implement blue/green deployment for applications running on AWS:

- Amazon ECS on Fargate with CodeDeploy—you can use CodeDeploy to push a new version of an application, have the new version automatically deployed on Fargate in a serverless model, and switch over traffic using a load balancer.

- Amazon EKS with Argo Rollouts—you can leverage Argo Rollouts, an open source project that enables progressive delivery, to automate a blue/green deployment process in Elastic Kubernetes Service (EKS).

- Amazon EC2 with Elastic Beanstalk—Amazon provides a CloudFormation template which you can use in your Amazon account to perform blue/green deployments. This template creates a new version of your application using Elastic Beanstalk, waits for manual approval, and then diverts traffic to it using Lambda functions.

The above are three patterns recommended by Amazon, with supporting documentation. But of course there are many other methods and tools you can use to run blue/green deployments on AWS.

Codefresh is a Kubernetes-native deployment solution supporting blue/green deployments, designed to simplify the continuous integration and delivery of applications.

Related content: Read our guide to blue/green deployment

3 Ways to Implement Blue/Green Deployment Using AWS Services

There are many ways to run blue/green deployments on AWS. Here are three possibilities, using Amazon ECS, Amazon EKS, and Amazon EC2.

Amazon ECS on Fargate with CodeDeploy

Amazon Elastic Container Service (Amazon ECS) is a cloud-based container management service. It provides capabilities for running, stopping, and managing containers on a cluster, allowing you to automate containers using tasks. ECS defines “services”, which can automatically run one or more tasks/containers.

Here is the general process for creating an ECS service with a blue/green deployment via the CLI:

- Create an ECS cluster and task definition for the application, which will run on AWS Fargate.

- Define a service that represents the application for which you want to perform blue/green deployment.

- Create a load balancer. To enable blue/green deployment you should use either an Application Load Balancer or a Network Load Balancer. Amazon recommends specifying two subnets from different Availability Zones.

- Create a load balancer target group that represents the original task set (the blue version), and create a listener to forward requests to the blue version.

- Create an AWS CodeDeploy application for your application code, specifying ECS as the compute option for the application.

- Create another load balancer target group, which will be used for the CodeDeploy deployment group (this will be your green version).

- In CodeDeploy, create a deployment group, and connect it to the second load balancer target group.

- Upload an application specification to CodeDeploy, and create a deployment.

- Use the CodeDeploy `get-deployment-target` command, specifying the deployment ID and the ECS service you defined earlier as the target, to track the deployment. Once the deployment is created, CodeDeploy automatically deploys the “green” version of your application and switches traffic over to it.

See the full tutorial from Amazon. Amazon ECS has recently added a Blue/Green deployment option to the Create Service wizard in the ECS console, so you can do the same thing using a visual UI.
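The application specification uploaded to CodeDeploy in the steps above is a small document that names the ECS task definition and the container that receives load balancer traffic. Below is a minimal sketch that builds one in Python and prints it as JSON. The helper name `build_ecs_appspec`, the ARN, the container name, and the port are placeholders chosen for illustration; check the field layout against the current CodeDeploy AppSpec reference before using it.

```python
import json


def build_ecs_appspec(task_definition_arn: str, container_name: str, container_port: int) -> dict:
    """Build an AppSpec document for an ECS blue/green deployment (illustrative helper)."""
    return {
        "version": 0.0,
        "Resources": [
            {
                "TargetService": {
                    "Type": "AWS::ECS::Service",
                    "Properties": {
                        # Task definition revision that contains the green version.
                        "TaskDefinition": task_definition_arn,
                        # Container and port the load balancer should route to.
                        "LoadBalancerInfo": {
                            "ContainerName": container_name,
                            "ContainerPort": container_port,
                        },
                    },
                }
            }
        ],
    }


# Placeholder values, not taken from a real account.
appspec = build_ecs_appspec(
    "arn:aws:ecs:us-east-1:111122223333:task-definition/sample-app:2",
    "sample-app",
    80,
)
print(json.dumps(appspec, indent=2))
```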

Amazon EKS with Argo Rollouts

Amazon Elastic Kubernetes Service (Amazon EKS) is a managed service for running Kubernetes on AWS. EKS installs, operates, and maintains the Kubernetes control plane on your behalf. It can automatically scale the control plane if necessary, as well as detect and replace unhealthy control plane instances. You can flexibly run Kubernetes nodes using Amazon EC2 or Fargate.

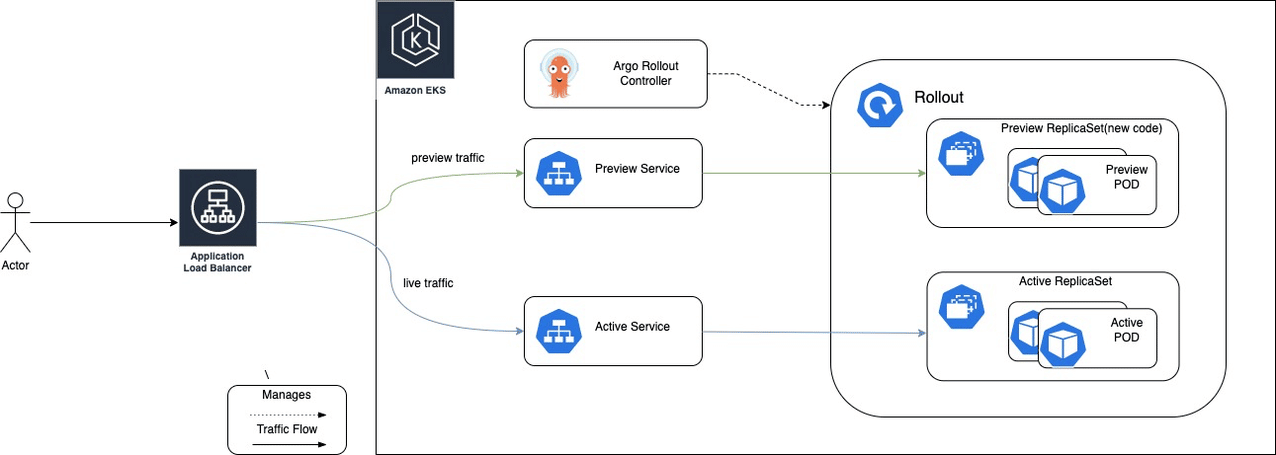

In a recent blog post, Amazon suggested the following process for running blue/green deployment with EKS and Argo Rollouts:

- Create an EKS cluster.

- Set up an Application Load Balancer to control traffic to your EKS cluster.

- Install the Argo Rollouts controller in your EKS cluster. Argo Rollouts is an open source project that enables progressive delivery in Kubernetes clusters.

- Create a new deployment using the `Rollout` object (similar to the Kubernetes `Deployment` object), selecting blue/green as the deployment strategy.

- The `Rollout` object first deploys the existing version of your application. Then, when you have a new version ready, you can update the pod template in the `Rollout` (as you would with a `Deployment`). The new version is deployed to a separate ReplicaSet, as shown in the accompanying diagram.

Image source: AWS

- QA teams can now test the new deployment, which is currently not exposed to users.

- If the new version is working properly, you can switch to the new version by running the `kubectl argo rollouts promote` command.
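To make the Rollout step more concrete, here is a minimal sketch that assembles a blue/green Rollout manifest as a Python dictionary and prints it as JSON, which `kubectl apply -f` also accepts. The helper name `build_bluegreen_rollout`, the application name, the image tag, and the two Service names are assumptions for illustration; the Services themselves would have to exist in the cluster already.

```python
import json


def build_bluegreen_rollout(name: str, image: str, active_service: str,
                            preview_service: str, replicas: int = 2) -> dict:
    """Build an Argo Rollouts manifest using the blueGreen strategy (illustrative helper)."""
    labels = {"app": name}
    return {
        "apiVersion": "argoproj.io/v1alpha1",
        "kind": "Rollout",
        "metadata": {"name": name},
        "spec": {
            "replicas": replicas,
            "selector": {"matchLabels": labels},
            # Same pod template shape as a Kubernetes Deployment.
            "template": {
                "metadata": {"labels": labels},
                "spec": {"containers": [{"name": name, "image": image}]},
            },
            "strategy": {
                "blueGreen": {
                    "activeService": active_service,    # receives live traffic
                    "previewService": preview_service,  # reaches the new ReplicaSet for testing
                    "autoPromotionEnabled": False,      # wait for a manual promote
                }
            },
        },
    }


rollout = build_bluegreen_rollout(
    "demo-app", "example.com/demo-app:2.0", "demo-app-active", "demo-app-preview"
)
print(json.dumps(rollout, indent=2))
```

With automatic promotion disabled, the new ReplicaSet stays behind the preview Service until someone promotes the rollout, which matches the QA step in the process above.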


Amazon EC2 with Elastic Beanstalk

Amazon Elastic Compute Cloud (Amazon EC2) offers scalable computing capacity in the AWS cloud. Amazon Elastic Beanstalk is a service that lets you deploy and scale web applications on servers like Apache and NGINX. You upload your application code and Elastic Beanstalk automatically handles resource provisioning, deployment, load balancing, and auto-scaling.

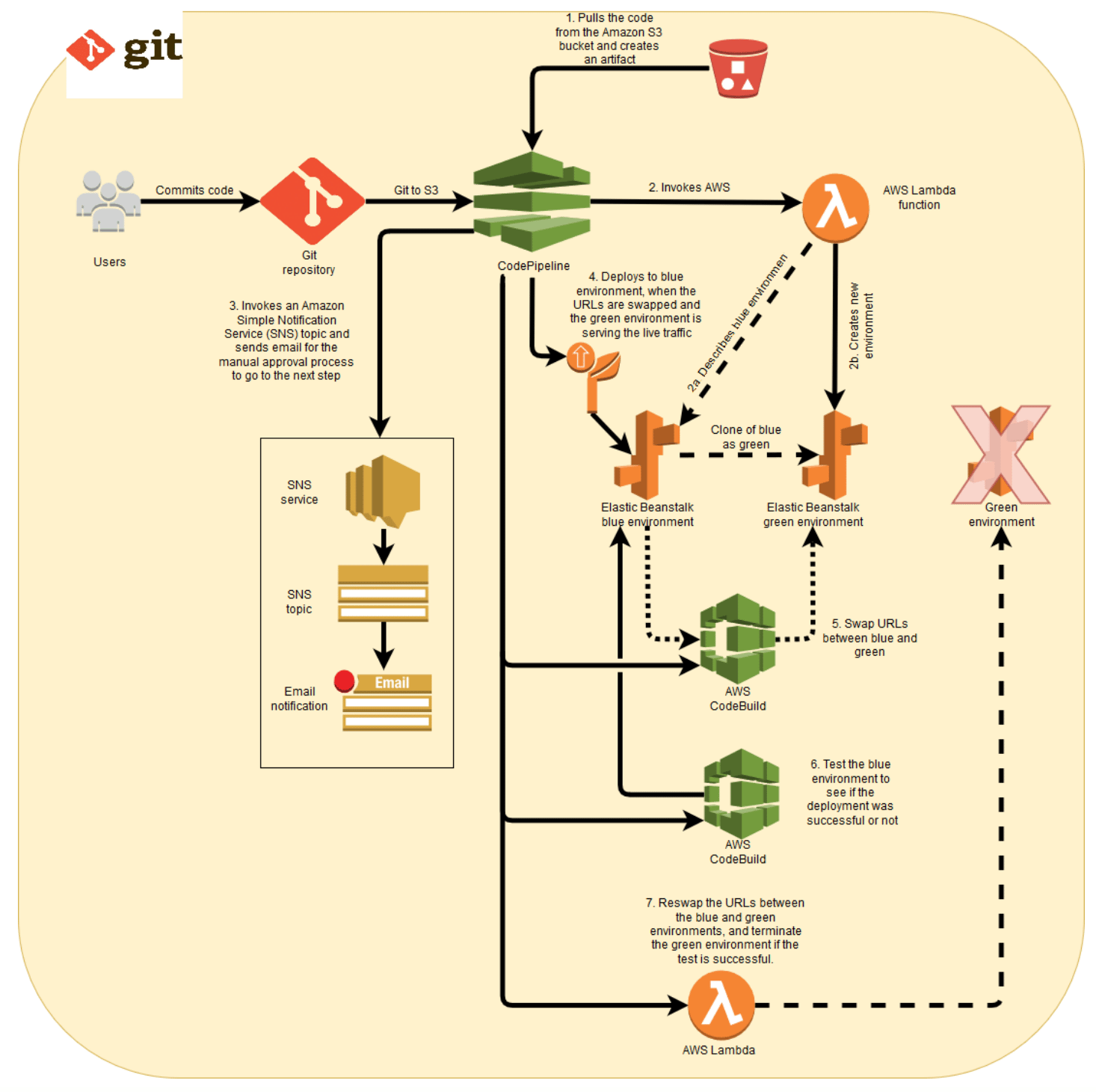

Amazon provides a blue/green reference deployment, which you can run automatically in your AWS account as a CloudFormation template. It works as follows (this process assumes an existing Elastic Beanstalk environment that serves as the blue version):

- AWS CodePipeline pulls a new version of the software from a source S3 bucket and delivers it to the Elastic Beanstalk environment.

- A Lambda function is triggered that clones the blue environment, creating a green environment.

- An email is sent to the operator for manual approval of the new version.

- Upon approval, CodeBuild switches the URLs between the blue and green environments. Now the green environment serves live traffic.

- AWS CodeBuild deploys the new version to the blue environment.

- If the deployment is successful, tests run on the blue environment to see if it returns a 200 (OK) status code. Otherwise, the pipeline doesn’t proceed, and treats the deployment as failed.

- If the test was successful, another Lambda function is triggered, switching the URLs again between the blue and green environments and terminating the green environment.

- Finally, the blue environment regains its initial Elastic Beanstalk URL and serves live traffic again.

Image source: AWS
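The double URL swap in this process can be hard to follow, so here is a small simulation of the sequence in plain Python. It does not call AWS at all; the function names, the version labels, and the two example URLs are hypothetical, and manual approval is assumed to have been granted.

```python
def swap_urls(envs: dict) -> None:
    """Exchange the URLs of the blue and green environments."""
    envs["blue"]["url"], envs["green"]["url"] = envs["green"]["url"], envs["blue"]["url"]


def run_pipeline(new_version: str, test_status: int) -> dict:
    """Simulate the clone / swap / deploy / test / swap-back sequence."""
    envs = {"blue": {"version": "v1", "url": "app.example.com"}}

    # Clone blue into a temporary green environment running the same version.
    envs["green"] = {"version": envs["blue"]["version"], "url": "app-temp.example.com"}

    # After approval, green takes over the live URL.
    swap_urls(envs)

    # The new version is deployed to blue, which is now out of the live path.
    envs["blue"]["version"] = new_version

    # A non-200 test result stops the pipeline; green keeps serving users.
    if test_status != 200:
        return envs

    # Swap back and terminate green: blue serves the new version on its original URL.
    swap_urls(envs)
    del envs["green"]
    return envs


print(run_pipeline("v2", 200))
print(run_pipeline("v2", 500))
```

In the failing case, users continue to reach the cloned green environment on the live URL, still running the old version, while the updated blue environment stays out of the live path.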

Common Ways to Distribute Traffic for Blue/Green Deployments

Blue/green deployments require a way to distribute traffic between the current (blue) and new (green) version. Here are a few technical options provided by AWS which can help you dynamically distribute traffic.

Amazon Route 53

Amazon Route 53 is a highly available, scalable, resilient DNS service that routes user requests for Internet-based resources to the correct destination. It operates on a global network of DNS servers and provides customers with additional features such as health checks, geolocation, and latency-based routing.

DNS is a common method for blue/green deployments. It can be used to forward traffic from one software version to another by updating DNS records in a hosted zone. You can adjust time to live (TTL) according to the urgency of the deployment—lower TTL values will allow faster propagation of DNS changes to clients.
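As a rough sketch of what such a DNS update looks like, the snippet below builds a change batch in the shape that the Route 53 `ChangeResourceRecordSets` API expects, pointing a record at the green environment with a short TTL. The helper name `build_dns_switch`, the record name, and the target hostname are placeholders for illustration; sending the request (for example through an AWS SDK) is left out.

```python
import json


def build_dns_switch(record_name: str, green_target: str, ttl: int = 60) -> dict:
    """Build a Route 53 change batch that repoints a CNAME at the green environment."""
    return {
        "Comment": "Point live traffic at the green environment",
        "Changes": [
            {
                "Action": "UPSERT",  # create the record or overwrite the existing one
                "ResourceRecordSet": {
                    "Name": record_name,
                    "Type": "CNAME",
                    "TTL": ttl,      # low TTL so clients pick up the change quickly
                    "ResourceRecords": [{"Value": green_target}],
                },
            }
        ],
    }


batch = build_dns_switch("app.example.com", "green-env.example.com")
print(json.dumps(batch, indent=2))
```

Rolling back is the same request with the blue hostname as the target, although clients may keep using a cached answer until the TTL expires.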

Elastic Load Balancing

Another common way to shift traffic between the blue and green environments is load balancing. Elastic Load Balancing (ELB) distributes incoming application traffic across registered targets such as Amazon Elastic Compute Cloud (Amazon EC2) instances. ELB scales based on incoming requests, performs health checks on Amazon EC2 resources, and integrates with other services such as EC2 Auto Scaling.
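With an Application Load Balancer, one way to do this is a listener that forwards to two target groups with different weights. The sketch below only builds the action payload, in the shape used by the `DefaultActions` parameter of the ELBv2 `ModifyListener` API as far as I know it; the helper name `build_forward_action` and the target group ARNs are placeholders for illustration.

```python
def build_forward_action(blue_tg_arn: str, green_tg_arn: str, green_weight: int) -> list:
    """Build a weighted forward action splitting traffic between blue and green."""
    if not 0 <= green_weight <= 100:
        raise ValueError("green_weight must be between 0 and 100")
    return [
        {
            "Type": "forward",
            "ForwardConfig": {
                "TargetGroups": [
                    {"TargetGroupArn": blue_tg_arn, "Weight": 100 - green_weight},
                    {"TargetGroupArn": green_tg_arn, "Weight": green_weight},
                ]
            },
        }
    ]


# Placeholder ARNs: an all-at-once cutover sends every request to green.
actions = build_forward_action("arn:blue-target-group", "arn:green-target-group", 100)
print(actions)
```

Setting `green_weight` back to 0 returns all traffic to the blue target group, which is the rollback path.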

Other Load Balancers and Service Meshes

Three other options for implementing a blue/green strategy are the Istio and Linkerd service meshes and the NGINX load balancer.

- Istio is a popular, open source service mesh technology that enables developers to connect, secure, control, monitor and run distributed microservices architectures regardless of platform, origin or vendor. Istio manages service interactions between containers and virtual machine (VM) based workloads. It can be used to route traffic between blue and green versions of a software application using flexible rules.

- NGINX is an open source web server that also runs reverse proxy, load balancing, email proxy and HTTP caching services. You can use the NGINX load balancer to distribute traffic between blue and green versions during a deployment. Another option is to use the NGINX ingress controller for Kubernetes to route external clients directly to the required version of the application.

- Linkerd provides a traffic split functionality that makes it possible to shift a portion of traffic from a Kubernetes service to a separate destination service. It can be used to implement blue/green deployment, gradually shifting traffic from an old version of a service to a new version. Linkerd provides this functionality via the Service Mesh Interface (SMI) TrafficSplit API.
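As an illustration of the TrafficSplit approach, the sketch below builds an SMI TrafficSplit manifest as a Python dictionary and prints it as JSON. It assumes the `split.smi-spec.io/v1alpha2` API version, where backend weights are plain integers; the version supported by your mesh may differ. The helper name `build_traffic_split` and all Service names are hypothetical.

```python
import json


def build_traffic_split(name: str, root_service: str, blue_service: str,
                        green_service: str, green_weight: int) -> dict:
    """Build an SMI TrafficSplit that divides traffic between blue and green Services."""
    return {
        "apiVersion": "split.smi-spec.io/v1alpha2",  # assumed API version
        "kind": "TrafficSplit",
        "metadata": {"name": name},
        "spec": {
            # Service that clients call; the mesh redistributes its traffic.
            "service": root_service,
            "backends": [
                {"service": blue_service, "weight": 100 - green_weight},
                {"service": green_service, "weight": green_weight},
            ],
        },
    }


split = build_traffic_split("web-split", "web", "web-blue", "web-green", 25)
print(json.dumps(split, indent=2))
```

Raising `green_weight` in steps up to 100 gives the gradual shift described above, while jumping straight from 0 to 100 gives a classic all-at-once blue/green cutover.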

AWS Blue/Green Deployment with Codefresh

Codefresh supports blue/green deployments in AWS natively using Argo Rollouts and the integrated Argo Rollouts Dashboard.

See blue/green deployment for more information.
