Argo CD has been skyrocketing in popularity, with the CNCF China survey naming Argo a top CI/CD tool for its power in deployment automation. And it’s no wonder: GitOps is a faster, safer, and more scalable way to do continuous delivery. Most of our own users are embracing GitOps to manage infrastructure and applications at scale in gaming, finance, defense, media, and other industries. GitOps leverages Git, infrastructure as code, and a closed reconciliation loop to take the guesswork out of deployments and make engineering and ops teams more productive.

Users new to Argo CD and GitOps often struggle to know how to set up their Git repos, how to install and manage Argo CD itself in a “GitOps-friendly” way, and how to handle flows like promoting applications across environments. We wanted a clear, opinionated way to use Argo CD, add applications, and promote changes across environments.

That’s why Codefresh engineers created Argo CD Autopilot! Argo CD Autopilot is a friendly command-line tool for bootstrapping Argo CD onto clusters, setting up your Git repos, managing applications across environments, and even simplifying disaster recovery. You can easily set up one-off environments or staging environments and promote changes across them in a straightforward, GitOps-powered way. It also works well for handling rollouts across regions in a controlled manner. Let’s jump in to show you how!

Try it out

Prerequisites

- Two Kubernetes clusters (in this example, one is named prod and the other staging)

- Standard CLIs (kubectl, Argo CD, Git)

Install Argo CD Autopilot CLI

Follow the instructions here to install the CLI. Then run argocd-autopilot version to make sure it’s working properly.
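Before going further, it can help to confirm that every CLI from the prerequisites is on your PATH. The following is a minimal sketch, not part of Autopilot itself: missing_tools is a hypothetical helper name, and the binary names (kubectl, argocd, git, argocd-autopilot) assume default installs.

```shell
# Print each named command that is not on PATH.
# Succeeds (returns 0) only when none are missing.
missing_tools() {
  rc=0
  for tool in "$@"; do
    if ! command -v "$tool" >/dev/null 2>&1; then
      echo "$tool"
      rc=1
    fi
  done
  return "$rc"
}

# Run the version check only when everything is installed.
if missing=$(missing_tools kubectl argocd git argocd-autopilot); then
  argocd-autopilot version
else
  echo "Not on PATH yet:" $missing
fi
```

Checking all four tools up front avoids getting halfway through the bootstrap before discovering a missing binary.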

We’re going to install Argo CD on the prod cluster and then connect the staging cluster to that instance to manage updates from a single place. A connection directly to the cluster is only required during setup. During normal operation no external cluster access is expected. You can check out my finished repo here.

Set Up Git

Remember, Argo CD Autopilot is going to bootstrap a repo with the Argo configuration, install Argo CD on a Kubernetes cluster, and link the instance to the Git repo to watch for new applications and any changes, so we’re going to need both Git and Kubernetes access. If you don’t normally use Git, you may need to set an author.

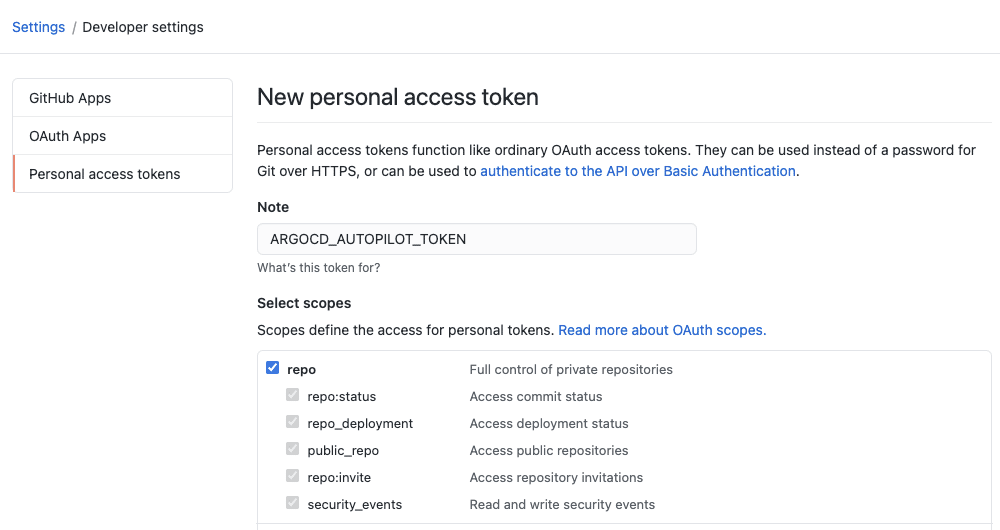
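If you are unsure whether an author is already configured, a quick check along these lines reports what Git has stored globally. This is a sketch under the assumption that you keep your identity in the global Git config; check_git_author is a hypothetical helper name.

```shell
# Report the globally configured Git author, or print the command
# needed to set any field that is missing.
check_git_author() {
  for key in user.name user.email; do
    if value=$(git config --global --get "$key" 2>/dev/null); then
      echo "$key = $value"
    else
      echo "$key is not set; set it with: git config --global $key \"<value>\""
    fi
  done
}

check_git_author
```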

Generate a personal access token on GitHub and make sure you scope the permissions so that it is allowed to create repos.

Don’t worry about what directory you’re working in; we’re not going to make any changes to the local filesystem here.

export GIT_TOKEN=ghp_PcZ...IP0

For our example, we’re going to create a new Git repo on GitHub. Specify the name of the repo you want. In my case, my GitHub username is todaywasawesome, so I would use GIT_REPO=https://github.com/todaywasawesome/autopilotdemo. Of course, you can change the repo name however you like.

argocd-autopilot repo create --owner [your git username] --name autopilotdemo

The output will show the full path of the Git repo that has been created. We’ll add that as a variable for later use.

export GIT_REPO=https://github.com/[your git username]/autopilotdemo
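The repo URL is simply the owner and name passed to repo create, joined under github.com. As a small sketch, you could keep both in variables so the two commands cannot drift apart; GIT_OWNER and REPO_NAME are hypothetical variable names chosen for this example, and only GIT_REPO is read by Autopilot.

```shell
# Placeholder values; replace GIT_OWNER with your own GitHub username.
GIT_OWNER="your-git-username"
REPO_NAME="autopilotdemo"

# The same two values would be passed to the create command:
#   argocd-autopilot repo create --owner "$GIT_OWNER" --name "$REPO_NAME"

# Compose the URL that later commands read from GIT_REPO.
export GIT_REPO="https://github.com/${GIT_OWNER}/${REPO_NAME}"
echo "$GIT_REPO"
```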

Bootstrap Argo CD and Add it to Your Repo

Make sure your Kubernetes context is set to the cluster you’d like to use. In this case, we’re going to install it to the prod cluster.

kubectl config current-context

Then bootstrap Argo CD. This will use the GIT_TOKEN and GIT_REPO we set earlier. They can alternatively be set with --git-token and --repo.

argocd-autopilot repo bootstrap
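Because the bootstrap depends on both variables, a short pre-flight check can catch a missing one before anything touches the cluster. The following is a minimal sketch; require_env is a hypothetical helper, not an Autopilot feature.

```shell
# Return non-zero and name each variable that is empty or unset.
# Note: this inspects the current shell, so the variables must also be
# exported for argocd-autopilot to see them.
require_env() {
  rc=0
  for name in "$@"; do
    eval "value=\${$name:-}"
    if [ -z "$value" ]; then
      echo "missing environment variable: $name" >&2
      rc=1
    fi
  done
  return "$rc"
}

if require_env GIT_TOKEN GIT_REPO; then
  echo "GIT_TOKEN and GIT_REPO are set; ready to run: argocd-autopilot repo bootstrap"
fi
```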

This will create the directory structure to hold the Argo CD application and the applications we’ll add later. When this command finishes, you will see a randomly generated Argo CD password.

By default, Argo CD does not expose its API. As of v0.1.5 the bootstrap command also creates a local port forwarding rule which will allow us to access the API without further configuration.

Create a new project for prod

Since we’re using our prod cluster for installation, we’ll start with that project. By default, the project create command will use the cluster Argo CD is installed on as the deployment target.

argocd-autopilot project create prod

Create a new app for prod

This will take our application repo, generate a Kustomize overlay in our Git repo, and trigger an Argo CD sync to deploy it. The -p flag is used to specify the project we just created.

argocd-autopilot app create demoapp --app github.com/argoproj-labs/argocd-autopilot/examples/demo-app/ -p prod

Now let’s set up our staging cluster.

Create and deploy our app to staging

First, we’ll create a new project for staging. Each environment will get its own project. The --dest-kube-context flag specifies the cluster we want to deploy to. Remember, you can use kubectl config get-contexts to view the clusters you have connected to locally. Specify the local name used there for your staging cluster.

We’ll also specify how the port-forwarding should work. Earlier we actually set up a default port-forwarding rule for accessing the Argo CD API but for the sake of avoiding errors, we’ll set it explicitly again.

argocd-autopilot project create staging --dest-kube-context [Local name for the Kubernetes cluster you want to deploy to] --port-forward --port-forward-namespace argocd
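A typo in the context name is an easy mistake here, so it may be worth confirming the name exists locally before passing it to --dest-kube-context. This is a sketch under assumptions: context_exists is a hypothetical helper, and STAGING_CONTEXT holds a placeholder name that you would replace with your own.

```shell
# Succeeds when the context name in $1 matches a whole line on stdin.
context_exists() {
  grep -Fxq -- "$1"
}

# Placeholder; use the local name of your staging cluster.
STAGING_CONTEXT="staging"

# kubectl prints one context name per line with -o name.
if command -v kubectl >/dev/null 2>&1; then
  if kubectl config get-contexts -o name | context_exists "$STAGING_CONTEXT"; then
    echo "context '$STAGING_CONTEXT' found"
  else
    echo "context '$STAGING_CONTEXT' not found in your kubeconfig"
  fi
fi
```

Matching the whole line with -x avoids a false positive when one context name is a prefix of another.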

And finally, we can deploy our app to staging.

argocd-autopilot app create demoapp --app github.com/argoproj-labs/argocd-autopilot/examples/demo-app/ -p staging

Because we used the same name for the app we should have a directory tree like this in our git repo.

kustomize/demoapp
├── base
│   └── kustomization.yaml
└── overlays
    ├── prod
    │   ├── config.json
    │   └── kustomization.yaml
    └── staging
        ├── config.json
        └── kustomization.yaml

Make a change in staging and promote it to production

If you’re not familiar with Kustomize, it’s a template-free way of customizing Kubernetes manifests by layering overlays on top of a shared base. We’re going to use it to modify the base application with a new image.

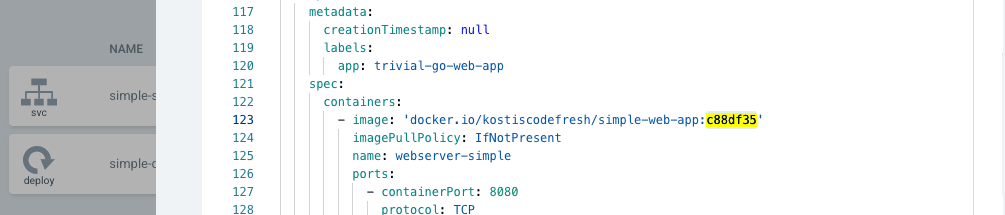

Edit kustomize/demoapp/overlays/staging/kustomization.yaml to add the new image we’d like to deploy. It should look like this:

apiVersion: kustomize.config.k8s.io/v1beta1
kind: Kustomization
resources:
- ../../base
images:
- name: docker.io/kostiscodefresh/simple-web-app
  newName: docker.io/kostiscodefresh/simple-web-app
  newTag: c88df35

Commit and push your changes back to your main branch and you’ll see staging automatically update. You can double-check the image tags are now different between the two environments in the Argo CD UI.

Now, to promote the change, we just copy it to our production overlay. This same pattern works for updating replicas, images, and really any other change you could make to your application!
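The copy step can be sketched as follows. To keep the example runnable anywhere, it builds a scratch copy of the overlay layout in a temporary directory; in practice you would run only the cp from the root of your cloned Git repo. It also assumes the prod overlay holds no prod-only settings, since copying the whole file would overwrite them.

```shell
# Scratch copy of the layout (stand-in for your cloned repo).
WORKDIR=$(mktemp -d)
APP_DIR="$WORKDIR/kustomize/demoapp"
mkdir -p "$APP_DIR/overlays/staging" "$APP_DIR/overlays/prod"

# Staging overlay with the new image tag, as edited earlier.
cat > "$APP_DIR/overlays/staging/kustomization.yaml" <<'EOF'
apiVersion: kustomize.config.k8s.io/v1beta1
kind: Kustomization
resources:
- ../../base
images:
- name: docker.io/kostiscodefresh/simple-web-app
  newName: docker.io/kostiscodefresh/simple-web-app
  newTag: c88df35
EOF

# Prod overlay before promotion (assumed to have no prod-only settings).
cat > "$APP_DIR/overlays/prod/kustomization.yaml" <<'EOF'
apiVersion: kustomize.config.k8s.io/v1beta1
kind: Kustomization
resources:
- ../../base
EOF

# Promote: copy the staging overlay over the prod overlay.
cp "$APP_DIR/overlays/staging/kustomization.yaml" \
   "$APP_DIR/overlays/prod/kustomization.yaml"

# Show the tag that prod now pins.
grep newTag "$APP_DIR/overlays/prod/kustomization.yaml"
```

After the copy, commit and push the prod overlay to your main branch just as you did for staging, and Argo CD picks up the change on its next sync.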

Review Your Work

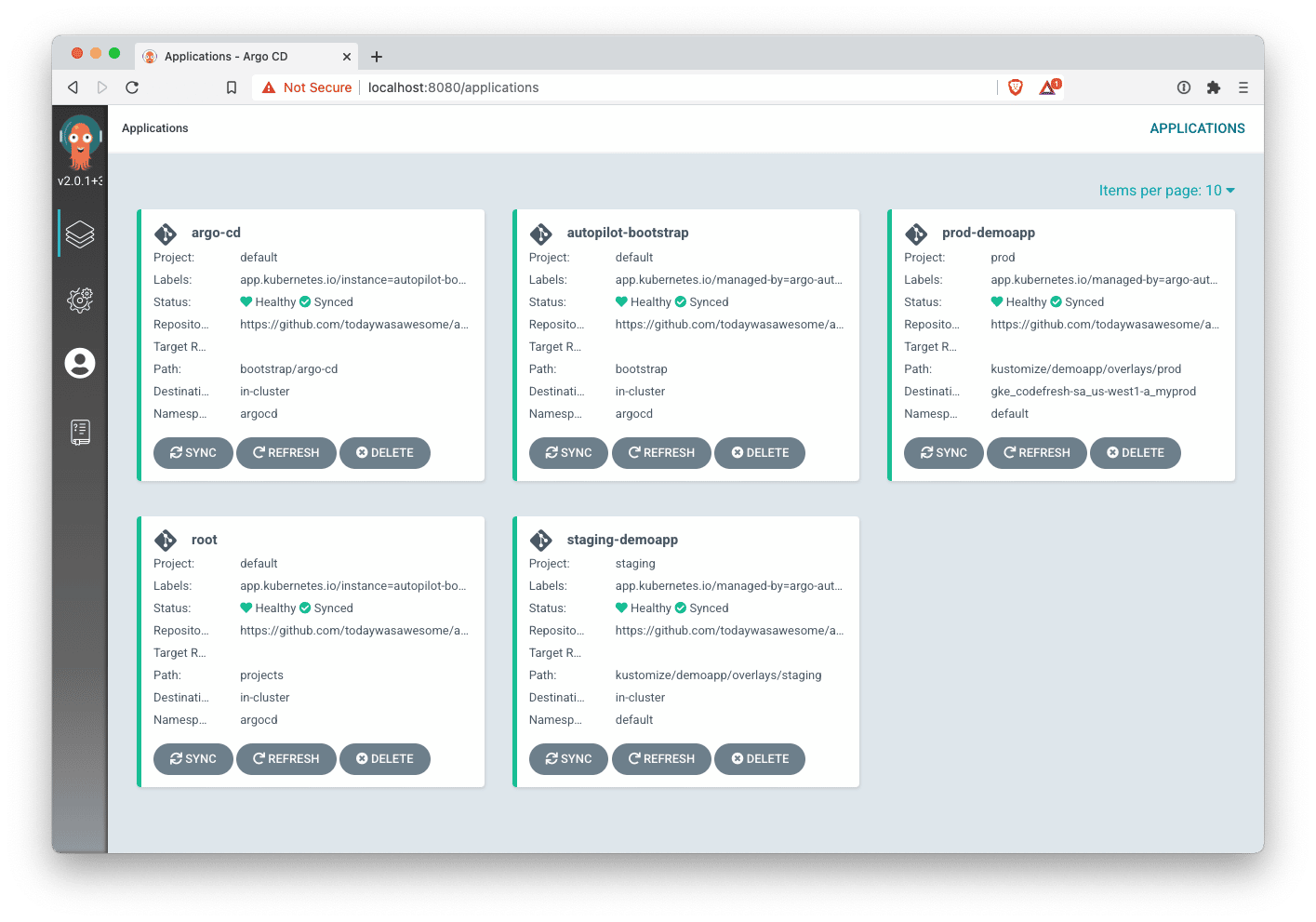

It may take up to 3 minutes for everything to sync, but you should see something very similar to this in your Argo CD UI. You can read all the methods for accessing the UI here, but it’s easy enough just to run kubectl port-forward svc/argocd-server -n argocd 8080:443

If you still don’t see the changes, click “refresh” on the root application; this will bypass the default 3-minute timer.

We’ve successfully created projects for staging and production, set up our application, and promoted a change through the two environments!

Conclusion

Many of the elements of GitOps are not new: infrastructure as code, removing toil, and even a closed reconciliation loop are ideas or practices that have been around for a decade or more. But the GitOps revolution isn’t about inventing something entirely new; it’s about making the best practices used by the best ops teams in the world completely accessible. Henry Ford didn’t invent the car, but he did make the car accessible to just about everyone. Argo CD Autopilot is the same: a streamlined, simple way to manage and deploy applications, updates, and environments at scale. It is a simple pattern that can be repeated by a team of 1 or a team of 10,000.

We hope this first release of Autopilot will help your teams move faster, spend less time building the tools you need to release code, and spend more time actually releasing code with confidence. This project is under active development, and we’d love your contributions, feedback, and of course your stars!

Special thanks to Noam Gal, Oren Gurfinkel, Roi Kramer, and Itai Gendler for their hard work to make this project a reality, and of course to all the wonderful maintainers of the Argo project. Learn more about Argo CD.