At Codefresh we understand how much Kubernetes has changed the local flow for developers, and we have already covered not only local Kubernetes distributions for Mac, Windows and Linux, but also specialized tools such as Draft, Skaffold, garden.io and tilt.dev that are focused on developers who work with Kubernetes.

This time we will look at Okteto, a brand new tool that aims to make developers more productive when using Kubernetes.

If you are already familiar with most tools in this space, you can think of Okteto as the union of different ideas from Skaffold and Telepresence, with a developer Docker registry, a remote build service, an easy way to deploy Docker images without manifests and much more added into the mix.

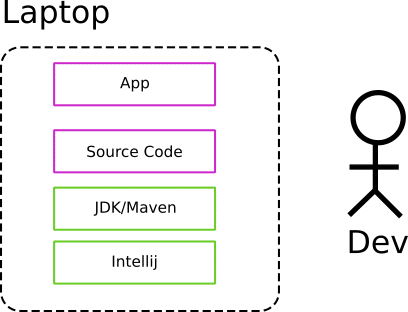

The traditional way of developing applications locally

Before Kubernetes (and microservices), setting up a development environment was usually a straightforward process. A developer would install their favorite IDE (such as IntelliJ or Visual Studio Code) along with the runtime of their favorite programming language (e.g. Java, Python, Ruby etc).

This made the basic development cycle very simple as one could make some changes within the source code and see the effect right away.

Developers that used dynamic/scripted languages also enjoyed the benefit of instant feedback as there was no intermediate compilation step needed compared to compiled languages.

This workflow is ideal for developers because:

- Setting up the development environment is easy

- Changes to source code can be evaluated in a matter of seconds

- The application runs locally on the laptop/workstation so no network resources are needed

- Testing, profiling, debugging and other associated developer activities also take place in the same environment with the native tools of the platform.

Now, of course even if this is the best case scenario, in real life there are some more concerns here such as choosing the correct version of the dev environment (e.g. JDK, Node.js/NPM) and in some cases an intermediate runtime (such as an application server in the case of Java or PHP), but in general, developers were happy with the feedback loop they gained with this approach.

Remember also that we are talking about local development BEFORE a commit takes place (i.e. while the developer is still implementing the features). Once a commit happens, the CI/CD system takes over and performs all the required verification actions, a process that might take some extra minutes.

But for the basic cycle of code-> test-> run, developers are accustomed to spending only a few seconds during the implementation phase of a feature (before the CI/CD process).

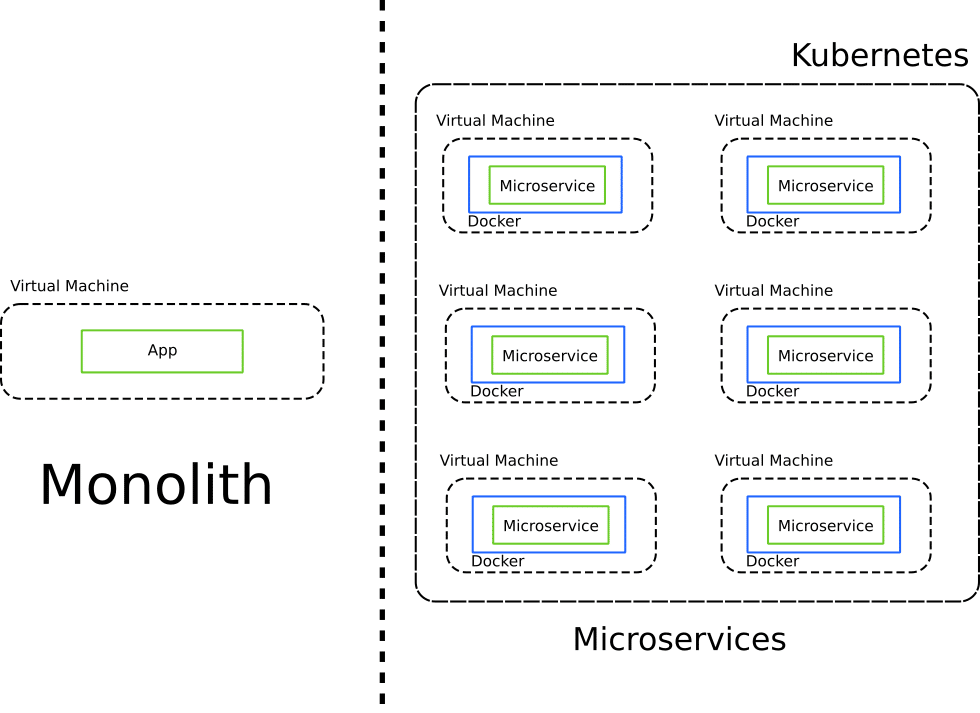

Developing locally in the era of Kubernetes

With the emergence of Kubernetes as the de facto clustering standard, and the rise of microservices as the preferred software architecture, all software teams had to change their workflows and habits.

In the case of developers, the complexity of setting up a local development environment greatly increased, as two more layers (Docker and Kubernetes) were now added into the mix.

The addition of Docker and Kubernetes not only makes deploying the application more complex, but also hinders local development in the worst possible ways:

- All native hot-reload and auto-refresh tools cannot be used anymore since the code is now in a Docker image

- Incremental compilation and cache reuse are now non-trivial tasks

- Debugging, tracing/logging and testing tools are no longer available as code is not running on its own anymore and ports are redirected/masked inside Kubernetes services

- All developer tools that are based on SSH functionality are no longer applicable as Kubernetes deployments are based on Docker images instead of VMs

Any development team that adopts containers and Kubernetes typically resorts to one of the following three approaches for local development:

- Use Docker and Docker-compose locally

- Use Docker and a local Kubernetes cluster such as minikube, microk8s, etc.

- Use Docker locally and a remote Kubernetes cluster for developers.

Let’s examine these approaches in turn.

Using Docker-compose

Docker compose was originally conceived as a tool specifically for local development with containers. The idea is simple: it allows you to launch one or more containers locally with their own network and “mimic” how an application would look in Kubernetes.

The simplicity of Docker compose is one of its major strengths. Only Docker needs to be installed on the workstation and nothing else. It is also a great way to reuse the native hot-reload tools of your programming language as with the proper use of volumes these fast-reload mechanisms are still possible.

The big problem, of course, is that running Docker compose is nothing like running a Kubernetes cluster. Just because your application works on Docker compose doesn’t mean it will also work on the production Kubernetes cluster. This just converts the problem of “works on my machine” to “works on my Docker compose installation”.

In summary the pros of Docker compose are:

- Very simple installation and management

- Docker compose is fairly easy to understand if you already have Docker knowledge

- Volumes can help with all the hot-reload tools and mechanisms

- You can use the same IDE and tools for local development as before

- If your laptop has enough capacity you can run the full application locally without any extra network resources

The disadvantages are:

- Running an application in Docker compose is not the same as running it in a Kubernetes cluster

- Specialized resources (e.g. GPU nodes) are not available locally

- Kubernetes APIs are not available locally

- Extra infrastructure (e.g. configmaps and secrets) is not available

- You need to handle two different sets of manifests (for Kubernetes and for Docker compose) and keep them in sync

The first disadvantage is essentially a big blocker when it comes to local development. Several production issues are caused because of differences between the production and staging environments. Using Docker compose for development and Kubernetes for production deployments is the embodiment of disparity between development and production.

Trying to use docker-compose to mimic a Kubernetes environment is a losing battle. There are valid cases for Docker-compose as far as developers are concerned, but using it as the sole development tool is not advised for any non-trivial microservice application.

Using a local Kubernetes cluster

The second approach is to launch a local Kubernetes cluster on your laptop. This is the natural response of developers as this is what we have already been doing with traditional applications. If for example an application is destined to be deployed on a Java application server or Apache httpd, developers would install the same thing locally (making sure that the exact same version is used).

Following a similar approach with Kubernetes is much more complex though. The biggest problem here is that while there are several local Kubernetes distributions to choose from, getting an application deployed to a cluster is a non-trivial process.

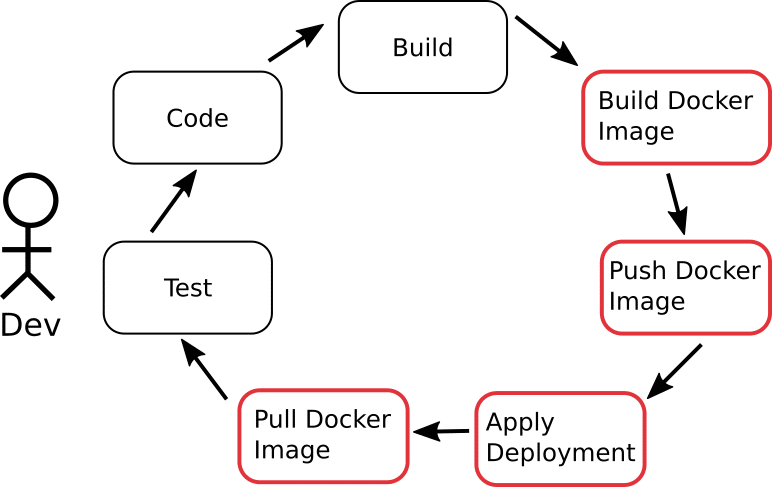

All the stages of the basic developer cycle of code->build->test are now completely broken:

The stages shown in red not only take time to finish (often minutes), but are also completely unrelated to the daily workflow of a developer, adding no extra value to feature development.

Seeing the result of your changes is now a lengthy process and what used to take seconds now takes minutes. And while a local Kubernetes distribution does not require as much knowledge as a production instance, developers are still forced to learn about Kubernetes concepts (such as deployments and services) which are completely unneeded for their main goal (which is to write code and deliver features).
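To make the cost of the longer loop concrete, here is a small back-of-the-envelope sketch. The stage names and durations are made-up assumptions for illustration only, not measurements of any real setup; substitute your own numbers.

```python
def loop_seconds(stages):
    """Total time of one code -> build -> test iteration, in seconds."""
    return sum(stages.values())


def daily_overhead_minutes(stages, baseline, iterations_per_day):
    """Extra minutes per day spent waiting, compared to the baseline loop."""
    extra = loop_seconds(stages) - loop_seconds(baseline)
    return extra * iterations_per_day / 60


# Hypothetical durations in seconds (assumptions, not measurements).
traditional = {"save file": 1, "hot reload": 2}
local_cluster = {
    "save file": 1,
    "build image": 60,
    "push image": 30,
    "apply manifests": 10,
    "wait for pod restart": 20,
}

print(loop_seconds(traditional))    # 3
print(loop_seconds(local_cluster))  # 121
print(round(daily_overhead_minutes(local_cluster, traditional, 50), 1))  # 98.3
```

With these assumed numbers, a developer who goes through the loop 50 times a day would spend well over an hour just waiting for stages that add nothing to the feature itself.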

Testing and debugging are also much more complex, and special tools need to be used in order to access the Kubernetes cluster.

In summary, using a local Kubernetes cluster is great for gaining Kubernetes knowledge, but there are much better solutions for developers as we will see with Okteto in the next sections.

The advantages of using a local cluster are:

- The development environment is now much closer to production

- Kubernetes API and services (e.g. config-maps) are available

- No network resources are needed if the whole application can run on the laptop

The disadvantages are:

- The main feedback loop is unnecessarily long

- Specialized resources (e.g. GPU nodes) are not available locally

- Launching the whole application might be impossible because of resource consumption

- Developers are forced to learn about Kubernetes management and APIs

- Debugging/testing tools and other IDE facilities cannot be used

- Hot-reload of code is not available

- The local cluster is still not the same as the production one

- The local cluster will still need support from the Operator/IT team. Running a local Kubernetes cluster will still require some setup and maintenance.

It is important to note that some tools (such as Skaffold and Draft) can help with the feedback loop, but some major disadvantages such as the resource consumption and the disparity between production and development still persist.

Using a Remote Kubernetes cluster

The third approach is to use a remote cluster which is very close to production (or even the same one as production).

While this approach sounds very good in theory, in practice the tools that make it work efficiently have only recently appeared (garden.io, tilt.dev and Okteto).

The advantages of using a remote cluster are:

- The development environment is the same as production

- Kubernetes APIs and services are available

- Specialized resources (e.g. GPU nodes) are available

- The whole application can be run on the cluster regardless of the resources available on your laptop

- All developers use the exact same development environment which is completely unrelated to the OS of each laptop.

The disadvantages are mostly the same as the ones mentioned in the previous section regarding local clusters. The big blocker is the long feedback loop and lack of support for hot-reloads and special IDE/debug facilities.

The importance of developing in an environment as close to production as possible cannot be overstated. This advantage is so significant that, until recently, it overshadowed everything else, even though it made the experience of developers really cumbersome when trying to use remote clusters.

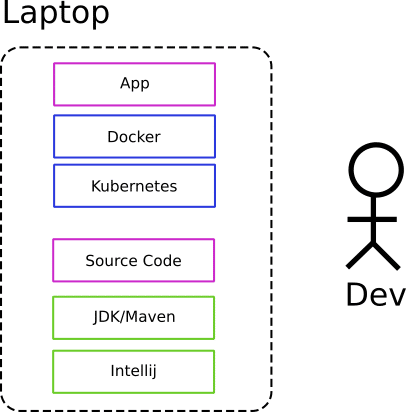

Another big benefit is that you are reducing the configuration surface of the developer’s machine. There is less stuff to install and keep updated, less software to maintain, and fewer things to set up when a new person joins a team.

Okteto also follows this approach and is designed specifically for enabling developers and their teams to operate efficiently with the remote cluster model.

How Okteto works

Now that we have seen all the issues with Kubernetes development, let’s see how Okteto solves them.

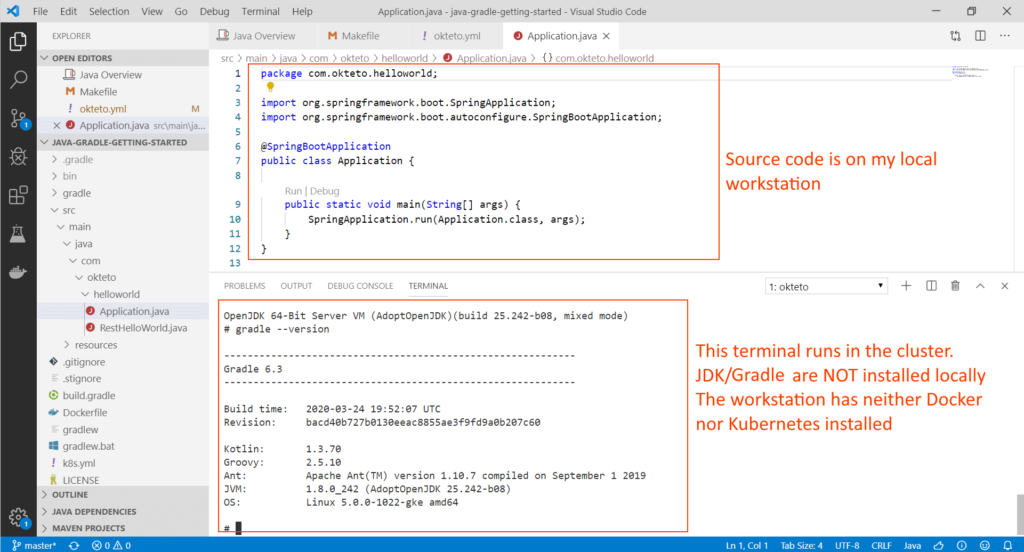

The Okteto CLI is open source and packaged as a single executable that works in the same manner on Mac, Windows and Linux. All the magic happens when you run “okteto up” inside the folder of your source code.

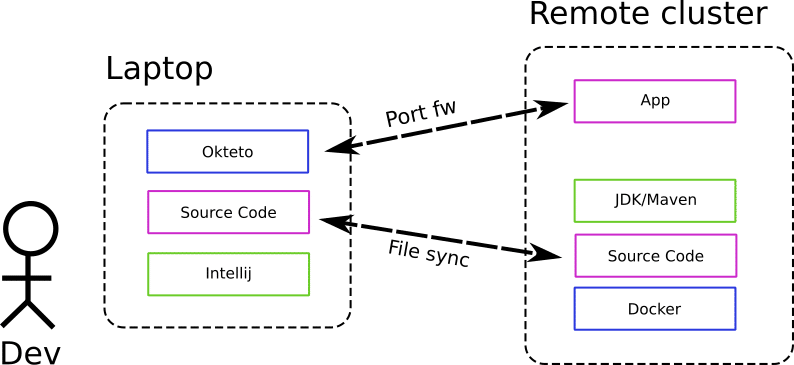

Once you run this command, Okteto does the following:

- Connects to your remote cluster

- Goes to the deployment that has your application and replaces its Docker image with a different one that contains your development environment (e.g. Maven and JDK, or npm, Python, Ruby etc) along with all your test tools (and optionally Visual Studio Code integrations)

- Sets up port-forwarding in both directions, both for your app and for your debugging tools

- Sets up automatic file synchronization in both directions so that everything you do locally is passed to the cluster and vice versa

- Sets up a tiny SSH server in the pod to make it easy to work with SSH-based tools (e.g. remote workspaces in Visual Studio Code)

The end result is that the remote cluster is seen by your IDE and tools as a local filesystem/environment. You edit your code normally on your workstation and as soon as you save a file, the change goes to the remote cluster and your app instantly updates (taking advantage of any hot-reload mechanism you already have). This whole process happens in an instant. No Docker images need to be created any more and no Kubernetes manifests need to be applied to the cluster. All that is taken care of by Okteto.
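The idea behind "save a file and the change reaches the cluster" can be illustrated with a minimal one-way synchronization sketch. This is only a conceptual illustration between two local folders; it does not claim to reflect how Okteto implements synchronization, and the function name `sync_changed_files` is hypothetical.

```python
import shutil
from pathlib import Path


def sync_changed_files(source: Path, target: Path) -> list:
    """Copy files that are new or newer in source into target.

    Returns the relative paths (POSIX style) of the files that were copied.
    """
    copied = []
    for src_file in sorted(source.rglob("*")):
        if not src_file.is_file():
            continue
        relative = src_file.relative_to(source)
        dst_file = target / relative
        if dst_file.exists() and dst_file.stat().st_mtime >= src_file.stat().st_mtime:
            continue  # the target copy is already up to date
        dst_file.parent.mkdir(parents=True, exist_ok=True)
        shutil.copy2(src_file, dst_file)  # copy2 also preserves the modification time
        copied.append(relative.as_posix())
    return copied
```

Calling the function once in each direction approximates a two-way sync, although a real tool also has to deal with deletions, conflicts and network transfer, which this sketch ignores.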

At the same time, if you want to view what your application is doing, you can simply go to http://localhost:8080 (or any other port that you normally use) and it will just work. Behind the scenes Okteto will forward your traffic to the actual Kubernetes cluster and the correct port of the Docker container that has your application environment.
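Port forwarding in general boils down to accepting connections on a local port and relaying the bytes to another endpoint. The sketch below shows that idea with plain TCP sockets. It is a simplified illustration, not Okteto's implementation (which goes through the cluster), and the names `start_forwarder`, `_handle` and `_pipe` are hypothetical.

```python
import socket
import threading


def _pipe(src, dst):
    """Copy bytes from src to dst until src reaches end of stream."""
    try:
        while True:
            data = src.recv(4096)
            if not data:
                break
            dst.sendall(data)
    except OSError:
        pass  # one side went away; stop relaying
    finally:
        try:
            dst.shutdown(socket.SHUT_WR)  # signal end of stream to the other side
        except OSError:
            pass


def _handle(client, target_host, target_port):
    """Relay one client connection to the target in both directions."""
    try:
        upstream = socket.create_connection((target_host, target_port))
    except OSError:
        client.close()
        return
    worker = threading.Thread(target=_pipe, args=(client, upstream), daemon=True)
    worker.start()
    _pipe(upstream, client)
    worker.join()
    client.close()
    upstream.close()


def start_forwarder(listen_port, target_host, target_port):
    """Listen on 127.0.0.1:listen_port and relay every connection to the target.

    Pass 0 as listen_port to let the OS pick a free port.
    Returns the listening socket.
    """
    server = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    server.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    server.bind(("127.0.0.1", listen_port))
    server.listen()

    def accept_loop():
        while True:
            try:
                client, _ = server.accept()
            except OSError:
                break  # the listening socket was closed
            threading.Thread(
                target=_handle,
                args=(client, target_host, target_port),
                daemon=True,
            ).start()

    threading.Thread(target=accept_loop, daemon=True).start()
    return server
```

From the point of view of a browser or debugger, the forwarded port behaves like a local service, which is why existing tools keep working unchanged.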

This means that debugging with Okteto is trivial. You simply use your local IDE and connect to the “local” port that you already use for debugging purposes.

It is important to notice that other than the CLI, Okteto doesn’t have any other dependencies on your local workstation. You don’t need to have Docker or Kubernetes installed on your laptop. You don’t even need a runtime (e.g. JDK, npm, Python etc) on your laptop either. All the programming tools are in the Docker image deployed on the cluster.

The exact image that you use for development (along with other options such as which port to forward) is defined in a very simple YAML file called okteto.yml, which is located in your source folder. You don’t need to create this file from scratch as the command “okteto init” will create one for you (and it will even try to autodetect your programming language).

Okteto has great documentation and you can also find specific guides for your favorite programming language such as Java, Node, Python, Ruby etc.

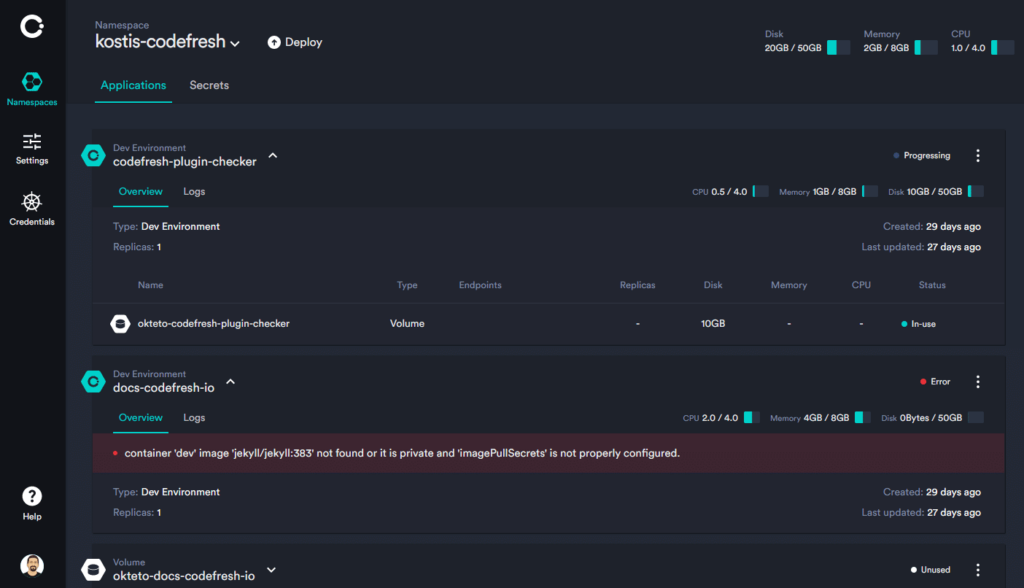

Okteto cloud and other features

Using Okteto for local development is also possible even if you don’t have your own cluster. Okteto Cloud is a SaaS offering of a hosted Kubernetes service with some extra features, such as:

- Your own namespaces to be used for different applications

- A remote Docker build service

- A Docker registry

- Automatic SSL endpoints for your deployments

- A dashboard to inspect your Kubernetes cluster in a way that is useful for developers

- A catalog of applications in the form of Helm charts (and the ability to add your own charts)

There is also a self-hosted version, Okteto Enterprise, that companies can run on their own premises instead of Okteto Cloud. Note, however, that the Okteto CLI works with the standard Kubernetes API and is fully open source, so you can use it on your own cluster without any restrictions.

The main goal of the Okteto team is to completely abstract Kubernetes as far as development is concerned. Developers should not have to deal with Kubernetes manifests or have deep cluster knowledge when they just want to write code and implement features.

To this end, Okteto has several other complementary features for easy local development. First of all, the Docker registry of Okteto can be used by the Okteto CLI without actually installing Docker on your local machine. With just the Okteto CLI you can build and push an image to the Okteto registry providing only a Dockerfile. You can also automatically build and update an existing deployment without the need for Kubernetes manifests.

Okteto has also recently released initial support for stacks. Okteto stacks are YAML definitions that sit between simple Docker compose files and Kubernetes manifests. They allow developers to define dependencies of applications, but without the full complexity of Kubernetes manifests.

Conclusion

Okteto is a tool designed specifically for developers who want to work with Kubernetes but without actually being Kubernetes experts. It removes all the friction of developing for containers and Kubernetes without limiting their power. With Okteto:

- You don’t need Docker or Kubernetes installed on your laptop anymore

- You don’t need to build Docker images and create deployments every time you make a source code change

- You still use your favorite IDE and debugging/testing tools that you are accustomed to.

- You can still use incremental builds and hot-reloads as supported by your programming language.

- A remote Kubernetes cluster can be used as a “local” filesystem/environment on your workstation

- You solve the “works on my machine” problem as your application is developed inside the cluster it is destined to be deployed to

- You can take advantage of all Kubernetes features and special resources (e.g. GPU nodes) for your application without any extra complexity.

For similar tools see our skaffold/garden article as well as tilt.dev.