What is Kustomize?

Have you always wanted to have different settings between production and staging but never knew how? You can do this with Kustomize!

Kustomize is a CLI configuration manager for Kubernetes objects that leverages layering to preserve the base settings of an application. It overlays declarative YAML artifacts on the original manifests to override default settings without changing those manifests.

Kustomize settings are defined in a kustomization.yaml file, and Kustomize is also integrated with kubectl. Because it works on raw, template-free YAML files, you can easily vary settings between environments such as development and production. This makes misconfigurations easier to troubleshoot and keeps use-case-specific overrides intact.

Kustomize also allows you to scale easily by reusing a base file across all your environments (development, production, staging, etc.) and then overlaying specifications for each.

- **Base Layer**: This layer specifies the most common resources and original configuration.

- **Overlays Layer**: This layer specifies use-case-specific resources by utilizing patches to override other kustomization files and Kubernetes manifests.

Overlays are what help us accomplish our goal by producing variants without templating.

Benefits of Kustomize

Kustomize offers some of the following benefits:

- **Reusability**: With Kustomize you can reuse one of the base files across all environments (development, staging, production, etc.) and overlay specifications for each of those environments.

- **Quick Generation**: Since Kustomize doesn’t utilize templates, a standard YAML file can be used to declare configurations.

- **Debug Easily**: Using a YAML file allows easy debugging, along with patches that isolate configurations, allowing you to pinpoint the root cause of performance issues quickly. You can also compare performance to the base configuration and other variations that are running.

- **Kubernetes Native Configuration**: Kustomize understands Kubernetes resources and their fields and is not just a simple text templating solution like other tools.

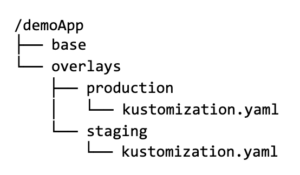

The image above shows an example of a Kustomize file structure, including the base and overlays within an application. You can also reference a code-based demo project on GitHub.

The tree structure above is a simple example of how you can deploy a single application to 2 different environments (staging and production). Let’s dig deeper into each directory and create an overlay.

base folder

The base folder holds common resources, such as the deployment.yaml, service.yaml, and configuration files. It contains the initial manifest and includes a namespace and label for the resources.
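
As a rough sketch, a base kustomization.yaml for this layout might look like the following. The file names deployment.yaml and service.yaml come from the description above; configMap.yaml, the `default` namespace, and the `app: hello` label are assumptions made for illustration and are not taken from the demo project.

```yaml
# base/kustomization.yaml (illustrative sketch, values are assumptions)
apiVersion: kustomize.config.k8s.io/v1beta1
kind: Kustomization

# Namespace applied to every resource in the base (assumed value)
namespace: default

# Label added to every resource and selector (assumed value)
commonLabels:
  app: hello

# Manifests that make up the base
resources:
- deployment.yaml
- service.yaml
- configMap.yaml  # assumed file name for the ConfigMap
```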

overlays folder

The overlays folder houses environment-specific overlays, which use patches defined in YAML files that are laid on top of the base to apply any changes. Let’s look at two of the environments in the overlays folder below, each of which also includes a kustomization.yaml.

kustomization.yaml

Each directory contains a kustomization file, which is essentially a list of resources or manifests together with instructions that describe how to generate or transform Kubernetes objects. Many other fields can be added, and a kustomization that refers to a base in this list acts as an overlay on that base.

Creating Overlays

Let’s explore a simple example of how overlays work.

We’ll make the following changes within our production and staging directories:

- Within the staging overlay, we will enable a risky feature that is NOT enabled in production.

- Within the production overlay, we’ll assign a higher replica count.

We’ll also make sure the web server’s greeting differs between the two cluster variants.

overlays/staging/kustomization.yaml

In the staging directory, let’s make a kustomization defining a new name prefix and different labels.

    namePrefix: staging-
    commonLabels:
      variant: staging
    commonAnnotations:
      note: "Welcome to staging!"
    bases:
    - ../../base
    patchesStrategicMerge:
    - config-map.yaml

Staging patch

Add a ConfigMap patch that changes the server greeting from “Hello!” to “Kustomize rules!” and enables the risky flag.

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: the-map
    data:
      altGreeting: "Kustomize rules!"
      enableRisky: "true"
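
A strategic merge patch is matched to its target by kind and name, so the base is expected to contain a ConfigMap called `the-map`. The following is a sketch of what that base ConfigMap might look like. The default values are taken from the comparison output later in this post, and the file name is an assumption.

```yaml
# base/configMap.yaml (assumed file name)
apiVersion: v1
kind: ConfigMap
metadata:
  name: the-map          # must match the name used in the staging patch
data:
  altGreeting: "Hello!"  # default greeting, replaced in staging
  enableRisky: "false"   # risky feature stays off unless an overlay enables it
```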

overlays/production/kustomization.yaml

Within the production directory, we will make a kustomization with a different name prefix and label.

    namePrefix: production-
    commonLabels:
      variant: production
    commonAnnotations:
      note: "Welcome to production!"
    bases:
    - ../../base
    patchesStrategicMerge:
    - deployment.yaml

Production patch

We’ll make a production patch that will increase the replica count.

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: the-deployment
    spec:
      replicas: 10
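
Recent Kustomize releases deprecate the `bases` and `patchesStrategicMerge` fields in favor of `resources` and `patches`. Assuming a newer Kustomize version, the production kustomization could be written along these lines; it is a sketch intended to behave the same way as the version above, not a copy of the demo project.

```yaml
# overlays/production/kustomization.yaml (sketch for newer Kustomize versions)
apiVersion: kustomize.config.k8s.io/v1beta1
kind: Kustomization
namePrefix: production-
commonLabels:
  variant: production
commonAnnotations:
  note: "Welcome to production!"
resources:
- ../../base              # used instead of the deprecated "bases" field
patches:
- path: deployment.yaml   # used instead of "patchesStrategicMerge"
```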

Now we can compare the two overlays. Together, the kustomizations and patches create the noticeable differences between the staging and production variants within the Kubernetes cluster.

Based on the changes above, the output would look something like this:

    < altGreeting: Kustomize rules!
    < enableRisky: "true"
    ---
    > altGreeting: Hello!
    > enableRisky: "false"
    < note: Welcome to staging!
    ---
    > note: Welcome to production!
    < variant: staging
    ---
    > variant: production

You can now see the difference between the staging and production overlays.

Overlays contain a kustomization.yaml and can also include manifests, either as new or additional resources or as patches to existing resources. The kustomization file defines how the overlay should be applied to the base, and the result is what we refer to as a variant.

Each time a change is made to an application, as in the example above, it is the overlays that do the heavy lifting.

So, now that you have learned a bit about Kustomize, you might be wondering how you can apply the GitOps workflow to it. Below we’ll explain more about GitOps and how you can apply it to your Kustomize project and deploy it.


What is GitOps?

Now, let’s learn more about how to apply GitOps to your Kustomize application deployment!

GitOps is a paradigm that incorporates best practices applied to both an application development workflow and the infrastructure of a system. This is done to empower organizations and developers to operate their systems from a single source of truth enabled by Git.

Two crucial aspects of GitOps that many people are less familiar with are:

- **GitOps controller**: The controller reads the declarative configuration and uses reconciliation loops to converge on the desired end state. It detects the differences between states and makes the necessary changes to maintain the desired state.

- **Automated reconciliation loop**: The reconciliation loop keeps the state as defined within a manifest. This is an important principle of the GitOps paradigm because these loops are what drive the entire cluster to the desired state in Git.

Now that you have a basic understanding of what both Kustomize and GitOps are and what some of their capabilities are, perhaps you’ve considered applying GitOps to your existing or future application and infrastructure workflow.

We’ll explore 2 approaches on how to use Kustomize with or without GitOps:

- Using only Kustomize

- Using Kustomize with ArgoCD

What is ArgoCD?

ArgoCD is a GitOps controller specifically created for Kubernetes deployments. It supports a variety of configuration management tools like Helm (take a look at our documentation for more information), Ksonnet, Kustomize, etc. The core component is the Application Controller, which continuously monitors any running applications and compares the live state against the desired state defined in a Git repository.

If the live state of a deployed application drifts from the target state, ArgoCD considers the application OutOfSync. It then provides reports and visualizations to identify these changes and can automatically apply modifications so that the target environments reflect the desired state of the system in Git.

If you’d like to explore these different deployment approaches with Kustomize from a code-based perspective, here is an example project that you can follow along with on GitHub. However, we will explore both of these approaches below with some more details.

Approach #1: Deploying only with Kustomize

If you want to use Kustomize, but your organization isn’t ready to implement GitOps with your workflow, you can still use Kustomize on its own.

Install Kustomize

First, install Kustomize. If you use kubectl version 1.14 or later, Kustomize is already included. Otherwise, install it for your operating system by following the Kustomize documentation.

Create a base directory

Next, to deploy an application with Kustomize, you need a kustomization.yaml file, which is added to the base directory.

The kustomization.yaml file specifies which resources to manage. This keeps Kubernetes configuration files under control as the number of resource types and environments grows.

Within the base directory, there is also a service and deployment resource.
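
To make this concrete, here is a minimal sketch of what the base Deployment could contain. The name `the-deployment` matches the production patch shown earlier; the `app: hello` label, the container name, the image, the port, and the replica count are placeholders chosen for illustration.

```yaml
# base/deployment.yaml (illustrative sketch)
apiVersion: apps/v1
kind: Deployment
metadata:
  name: the-deployment
spec:
  replicas: 3                 # base default; an overlay patch can override it
  selector:
    matchLabels:
      app: hello
  template:
    metadata:
      labels:
        app: hello            # must match spec.selector.matchLabels
    spec:
      containers:
      - name: web             # placeholder container name
        image: nginx:1.25     # placeholder image, not the demo project's
        ports:
        - containerPort: 80
```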

Create an Overlays directory

Next, create an overlays directory. This directory is what allows you to customize the base by applying patches that modify its resources.

The overlays still use the same resources as the base but may vary in the number of replicas in a deployment, the CPU for a specific pod, or the data source used in the ConfigMap, etc.

Within our example, we have a staging and a production overlay. These overlay directories contain the kustomizations and patches previously mentioned that are required to create distinct staging and production variants in a cluster. However, with Kustomize you can use overlays for anything needed to organize environments, whether by location (USA, Asia, Europe), internal/external, team A/team B, and so on.

Kustomize is essentially an overlay-based engine that works by locating specific sections of a manifest and replacing them with the required fields and values. The result is then merged and deployed!

Create a namespace for specific environments

kubectl create ns staging

or

kubectl create ns production
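
If you prefer to keep namespaces declarative as well, the `kubectl create ns` commands above have a manifest equivalent. This is a minimal sketch for the staging namespace; the file name is an assumption, and the production namespace would follow the same pattern.

```yaml
# namespace-staging.yaml (hypothetical file name)
apiVersion: v1
kind: Namespace
metadata:
  name: staging
```

For a variant's resources to land in that namespace, its overlay kustomization.yaml can set the `namespace` field, for example `namespace: staging`.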

Build and Deploy to environments

You can then apply the overlays to your cluster and deploy with the command:

kubectl apply -k overlays/staging

or

kubectl apply -k overlays/production

You are now successfully using Kustomize to manage your Kubernetes configurations and deploy your application to production and staging environments!

Approach #2: Deploying using Kustomize with GitOps

In the previous approach, you learned how to deploy an application using only Kustomize, so let’s see how it fits into ArgoCD and how it can be used in a GitOps workflow.

ArgoCD supports Kustomize: it can read a kustomization.yaml file, deploy with Kustomize, and manage the state of the resulting YAML files.

ArgoCD monitors the resources within the Git repository for any changes, ensuring that the live state of your system matches the desired state. Each time a change is committed, ArgoCD detects the modification and updates the deployment.

So, let’s begin walking through the process to deploy a Kustomize project using ArgoCD!

Install ArgoCD and access a Kubernetes Cluster

First, make sure your Kubernetes cluster is set up and that you are logged in to ArgoCD so the resources can be deployed. You can use any Kubernetes cluster; you will also need to install the argocd CLI.

Once the argocd CLI is installed, you can connect to the ArgoCD server and log in to the ArgoCD UI. If you’re more of a terminal fan, you can also deploy the application through the argocd CLI. Feel free to reference the demo application, which walks you through the deployment process in your terminal.

Create an ArgoCD application

Now, we can set up the Kustomize Project. Similar to using Helm with GitOps, we will approach this deployment the same way within the UI by creating an ArgoCD application.

Let’s begin! First, click + NEW APP, enter a name for the ArgoCD application, select the default project, and set the SYNC POLICY to Automatic.

Next, when adding your Kustomize project it helps to include a specific Path to segregate the application manifests inside the Git repository. We can then ask ArgoCD to only read the specific directories in the repository and read manifests within that path.

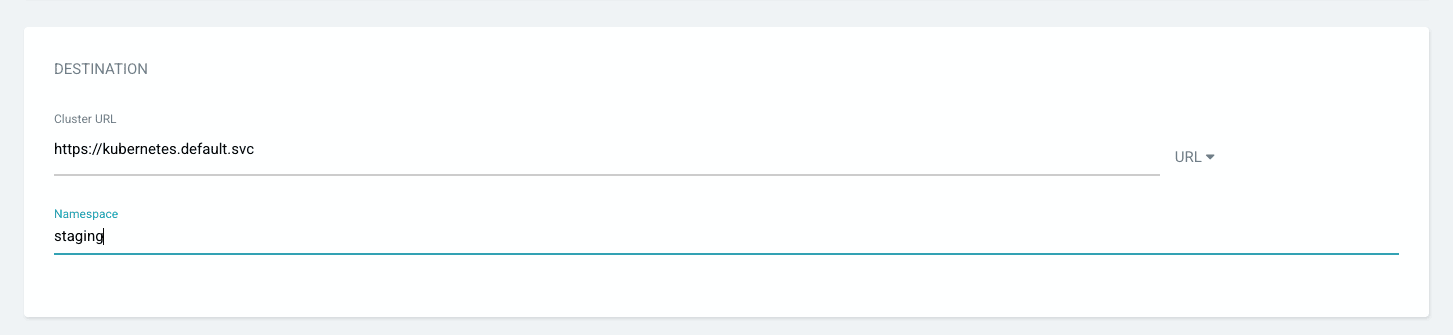

Then, in the Destination section, provide the details of the target Kubernetes cluster. Also, either select the auto-create namespace checkbox when you enter the namespace, or enter a namespace you created earlier.

ArgoCD will read the kustomization.yaml file in the path you provided and give you the option to override its settings with different values.
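
The same settings can also be captured declaratively. The following is a sketch of an ArgoCD `Application` manifest roughly equivalent to the UI steps above, assuming ArgoCD runs in the `argocd` namespace. The application name and repository URL are placeholders, not values from the demo project.

```yaml
apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: kustomize-staging          # placeholder application name
  namespace: argocd                # assumes ArgoCD is installed here
spec:
  project: default
  source:
    repoURL: https://github.com/example-org/example-repo.git  # placeholder URL
    targetRevision: HEAD
    path: overlays/staging         # directory containing kustomization.yaml
  destination:
    server: https://kubernetes.default.svc   # the cluster ArgoCD runs in
    namespace: staging
  syncPolicy:
    automated: {}                  # same as choosing the Automatic sync policy
    syncOptions:
    - CreateNamespace=true         # same as the auto-create namespace checkbox
```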

Synchronize Application and Deploy

Assuming you enabled auto-synchronization when creating your ArgoCD application, it will read the parameters and the Kubernetes manifests. Then, once the manifests are applied, you can review the application health and resources you deployed.

If your application fails to synchronize, you can run the argocd app history command, where APPNAME is the name of your ArgoCD application, to view its deployment history and identify a possible error:

argocd app history APPNAME

If you need to roll back, run this command with the ID of a previous deployment from that history:

argocd app rollback APPNAME ID

Because ArgoCD tracks every deployment against the Git repository, these commands give you a faster and safer way to inspect and revert releases, and to follow the active Kubernetes resources and events. These actions can also be done within the UI.

Once the application is healthy and synchronized, each time you commit a change to a kustomization.yaml file, ArgoCD will detect it and update your deployment. You can even specify particular Kustomize tags and set up custom build options for your Kustomize build.
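
As one example of custom build options, ArgoCD reads a `kustomize.buildOptions` key from its `argocd-cm` ConfigMap and passes the value to `kustomize build`. This is a sketch that assumes ArgoCD is installed in the `argocd` namespace; the flag shown is only an example of an option you might need.

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: argocd-cm
  namespace: argocd               # assumes the default install namespace
  labels:
    app.kubernetes.io/name: argocd-cm
    app.kubernetes.io/part-of: argocd
data:
  # Extra arguments passed to "kustomize build" when ArgoCD renders manifests
  kustomize.buildOptions: --load-restrictor LoadRestrictionsNone
```

Because this setting lives in ArgoCD's own configuration, it affects every Kustomize application that the ArgoCD instance manages.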

Summary

Kustomize allows you to use different configurations of a base Kubernetes manifest. In this post, we’ve covered the Kustomize basics and how to deploy both with Kustomize alone and with GitOps. Either way, you can leverage the power of Kustomize to define your Kubernetes files without using a templating system.

To start deploying your Kustomize application, sign up for a Codefresh account today to apply GitOps to your deployment process!